PostgreSQL to Snowflake Using OpenFlow

Pooja Peddapally

1. Executive Summary

- Problem: Organizations often struggle to move operational PostgreSQL data into Snowflake efficiently while maintaining freshness and reliability.

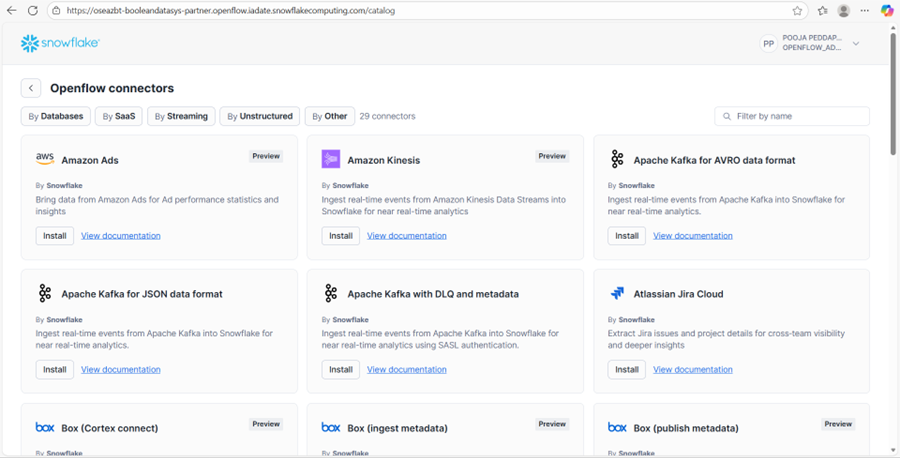

- Recommended Approach: Use Snowflake OpenFlow with the PostgreSQL connector to capture database changes and ingest them into Snowflake.

- Where it fits: Operational analytics, reporting pipelines, and real-time data synchronization use cases.

- Key outcomes: Simplified ingestion architecture, low latency replication, improved pipeline reliability, and reduced operational overhead.

- What the reader can implement: A practical PostgreSQL → Snowflake ingestion pipeline using OpenFlow with logical replication.

2. Background

Modern organizations typically separate operational databases from analytical systems. PostgreSQL is commonly used to support transactional workloads, while Snowflake is designed for large-scale analytics and reporting.

To enable analytics, operational data must be continuously ingested into the data warehouse. Traditional ETL pipelines often require multiple tools, custom scripts, and orchestration frameworks. These pipelines can become difficult to maintain as data volume grows.

Snowflake OpenFlow simplifies this process by providing a native data ingestion framework capable of capturing changes from external systems and loading them directly into Snowflake.

3. Problem

3.1 Symptoms

- Data pipelines require multiple ETL tools and complex orchestration.

- Operational data arrives in Snowflake with significant delays.

- Pipeline failures require manual intervention and debugging.

3.2 Impact

Delayed data ingestion reduces the effectiveness of dashboards, reporting, and analytics. Data engineering teams also spend significant time maintaining ETL pipelines instead of building new data products. A scalable and reliable ingestion framework becomes necessary as data systems grow.

4. Requirements & Assumptions

4.1 Data & SLA

- Data Volume: Millions of rows per month depending on application workload

- Freshness/SLA: Near real-time or frequent ingestion

- Environments: Development, staging, and production

4.2 Security & Compliance

- Data Sensitivity: Typically non-PII operational data, though sensitive fields may exist

- Access Model: Snowflake role-based access control (RBAC)

4.3 Tooling & Constraints

- Ingestion tool: Snowflake OpenFlow

- Source database: PostgreSQL hosted on AWS RDS

- Constraints: Network connectivity, logical replication configuration, and schema evolution handling

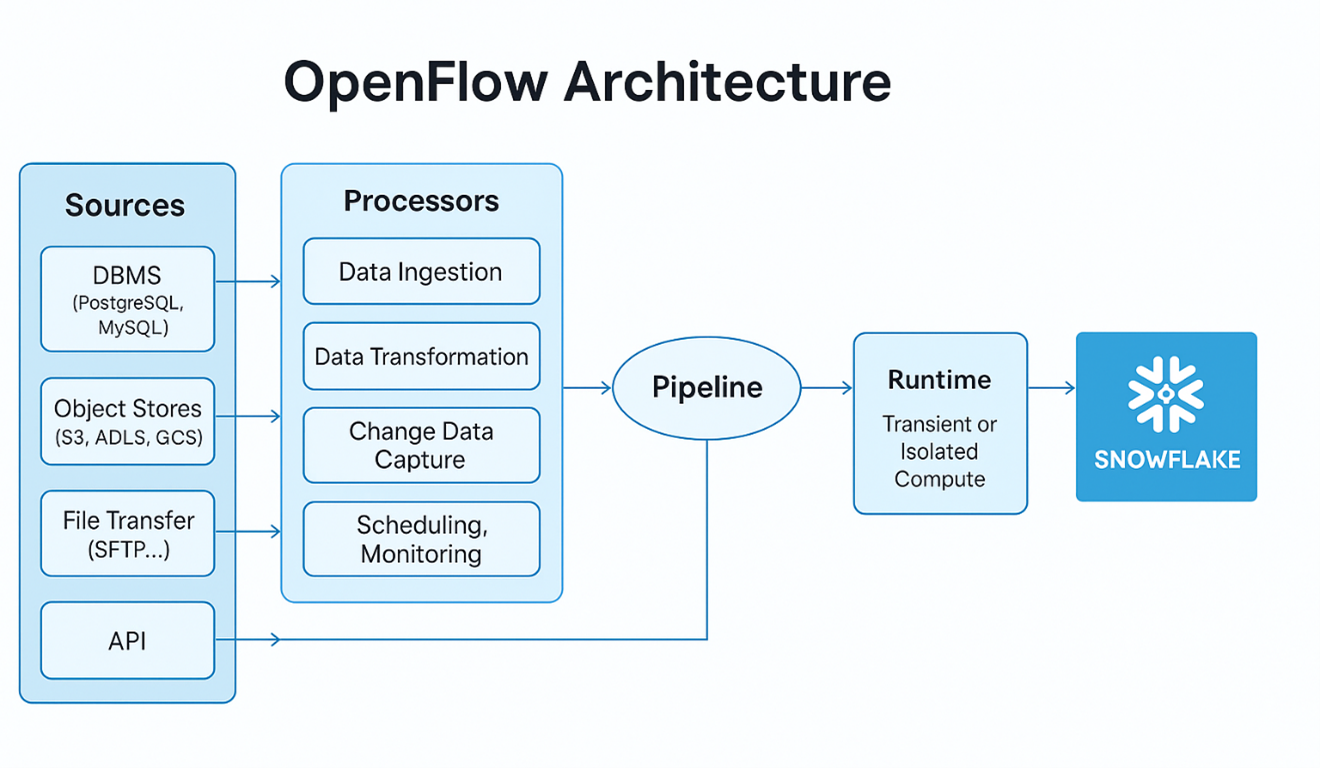

5. Recommended Architecture

5.1 High-Level Flow

- PostgreSQL stores operational data.

- Logical replication captures database changes.

- OpenFlow runtime connects to PostgreSQL.

- PostgreSQL connector reads table changes.

- Processors transform and stream the data.

- Data is ingested into Snowflake tables.

- Snowflake enables analytics and reporting.

5.2 Architecture Diagram

PostgreSQL (RDS) → OpenFlow Runtime → Processors → Snowflake Tables → BI / Analytics

5.3 Options

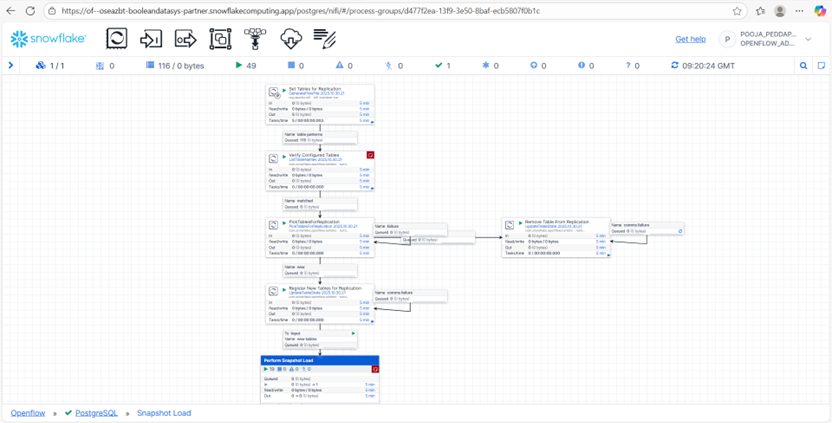

Option A: Snapshot Ingestion

- Full table extraction

- Used for initial data loads

Option B: CDC Ingestion

- Change Data Capture using logical replication

- Used for continuous updates

Selection Guide

- Snapshot ingestion is suitable for initial migrations

- CDC ingestion is preferred for ongoing data synchronization

6. Implementation

6.1 Setup

Snowflake environment setup includes:

- Creating database and schema

- Configuring warehouse for processing

- Creating roles for OpenFlow access

- Configuring network rules and external access integrations

External configuration includes:

- PostgreSQL database running on AWS RDS

- Logical replication enabled

- Network connectivity between Snowflake and PostgreSQL

6.2 Core Build Steps

- Configure PostgreSQL logical replication settings.

- Deploy OpenFlow runtime and PostgreSQL connector.

- Configure connection parameters (host, port, credentials).

- Select tables to replicate.

- Enable processors and validate the data pipeline.

6.3 Configuration Defaults

- Watermark Strategy: CDC via PostgreSQL replication logs

- Deduplication Key: Primary key of source tables

- Load Frequency: Continuous ingestion

- Error Handling: Connector retries and runtime monitoring

7. Validation & Testing

7.1 Data Validation

Row Count Validation

Compare row counts between PostgreSQL and Snowflake tables.

Example:

SELECT COUNT(*) FROM postgres_table;

SELECT COUNT(*) FROM snowflake_table;Duplicate Checks

SELECT id, COUNT(*)

FROM snowflake_table

GROUP BY id

HAVING COUNT(*) > 1;Freshness Checks

Verify the latest ingestion timestamp matches the source database.

7.2 Reconciliation

Periodic reconciliation can be performed by comparing:

- Row counts

- Primary key distributions

- Update timestamps

8. Security & Access

- Required Snowflake permissions: Database usage, schema access, and table creation privileges

- Secret management: Credentials stored securely within Snowflake integrations

- Separation of duties: Data engineers configure pipelines while analysts consume data

- Auditability: Snowflake query history and runtime logs provide monitoring and traceability

9. Performance & Cost

9.1 Performance Considerations

- Use appropriate warehouse sizes depending on ingestion volume

- Monitor runtime performance and connector throughput

9.2 Cost Drivers

- Compute cost from Snowflake warehouses

- Storage cost for ingested data

- Data transfer costs if cross-region connections are used

9.3 Cost Controls

- Configure auto-suspend warehouses

- Use resource monitors

- Optimize ingestion batch sizes

10. Operations & Monitoring

10.1 What to Monitor

- Pipeline success or failure rate

- Data freshness

- Unexpected changes in data volume

- Runtime health and connector status

10.2 Alerting

Alerts can notify administrators when pipelines fail or ingestion latency increases.

10.3 Runbook

Common issues:

Driver error → Upload correct PostgreSQL driver

Permission error → Reapply Snowflake role privileges

Connection failure → Verify network rules and security groups

11. Common Pitfalls

- Missing Snowflake privileges for OpenFlow runtime

- Incorrect PostgreSQL replication settings

- Network connectivity issues

- Large initial snapshot loads

- Schema changes breaking ingestion pipelines

12. Variations / Use Cases

- PostgreSQL CDC pipelines for operational analytics

- API ingestion pipelines using OpenFlow

- Real-time analytics dashboards

- Machine learning pipelines requiring fresh operational data

13. Next Steps

Organizations implementing Snowflake ingestion pipelines should:

- Evaluate CDC strategies for operational databases

- Implement monitoring and alerting

- Optimize warehouse sizing for ingestion workloads

Pooja Peddapally

Associate Data Engineer

Boolean Data Systems

Aspiring Associate Data Engineer with hands-on experience in designing and supporting cloud-based data pipelines and analytics workflows. Skilled in building scalable data solutions, streamlining data processing, and enabling meaningful business insights. Passionate about modern data engineering, efficient data systems, and scalable cloud architectures.

About Boolean Data

Systems

Boolean Data Systems is a Snowflake Select Services partner that implements solutions on cloud platforms. we help enterprises make better business decisions with data and solve real-world business analytics and data problems.

Services and

Offerings

Solutions &

Accelerators

Global

Head Quarters

USA - Atlanta

3970 Old Milton Parkway,

Suite #200, Alpharetta, GA 30005

Ph. : 770-410-7770

Fax : 855-414-2865